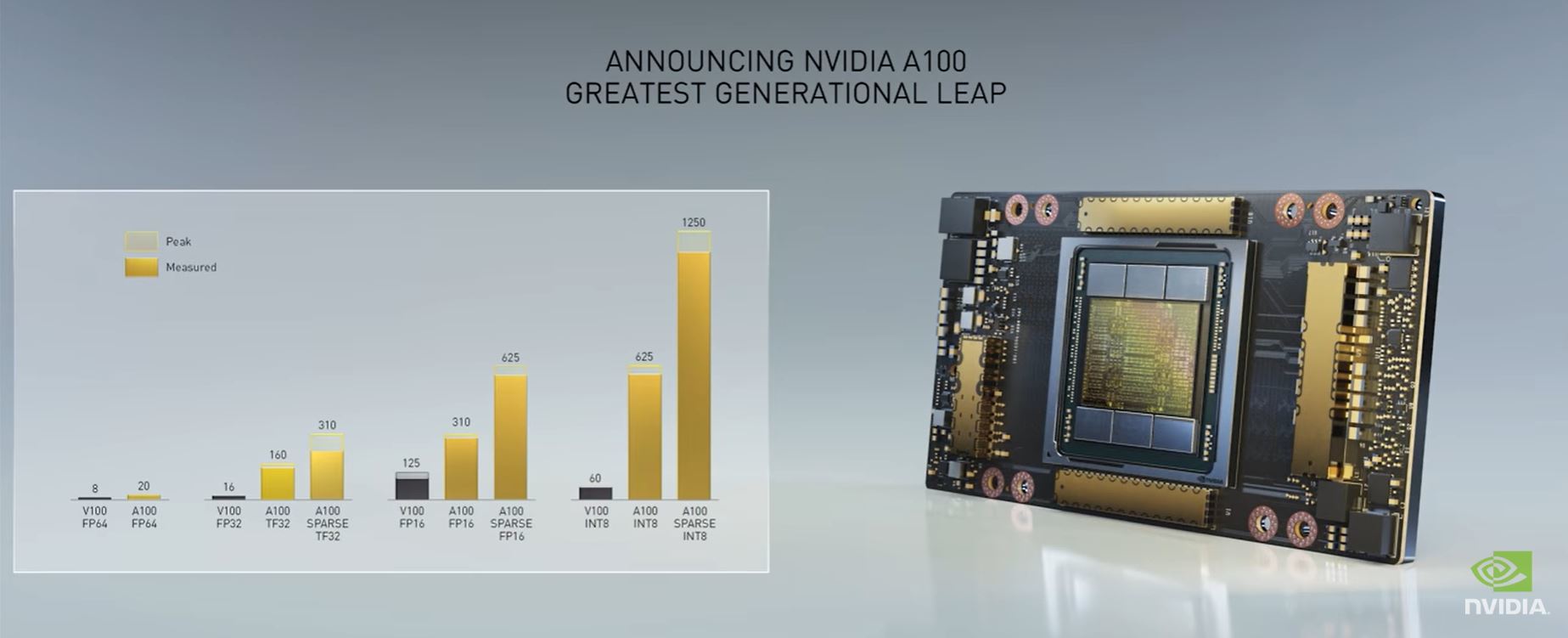

The A100 is the next-gen NVIDIA GPU that focuses on accelerating training, HPC and inference workloads. Its performance gains over the V100, along with various new features, show that this new GPU model has much to offer for server data centers. This DfD will discuss the general improvements to the A100 GPU, with the intention of educating customers on how they can best use the technology to accelerate their needs and goals.

PowerEdge Support and Benchmark Performance

The A100 will be most impactful on PCIe Gen4 compatible PowerEdge servers, such as the PowerEdge R7525, which currently supports 2 A100s and will support up to 3 A100s within the first half of 2021. The A100 GPU will roll out on different Dell EMC next-gen server platforms over the course of H1 CY21. Benchmarking data comparing the A100 and V100 on various workloads are shown below.

Inference

Figure 2 displays the performance improvement of the A100 over the V100 for two different inference benchmarks, BERT and ResNet-50 (RN50). The A100 performed 2.5x faster than the V100 on the BERT inference benchmark, and 5x faster on the RN50 inference benchmark. This translates to significant time reductions when running trained neural networks to classify and identify known patterns and objects.

Figure 2 – Inference comparison between A100 and V100 for BERT and RN50 benchmarks

HPC

Figure 3 displays the performance improvement of the A100 over the V100 for four different HPC benchmarks. The A100 performed between 1.4x and 1.9x faster than the V100 on these benchmarks. Users looking to process data and perform complex HPC calculations will benefit from reduced completion times when using the A100 GPU.

Figure 3 – HPC comparison between A100 and V100 for GROMACS, NAMD, LAMMPS and RTM benchmarks

Training

Figure 4 displays the performance improvement of the A100 over the V100 for two different training benchmarks, BERT Training TF32 and BERT Training FP16. The A100 performed 5x faster than the V100 on the BERT TF32 benchmark, and 2.5x faster on the BERT FP16 benchmark. Users looking to train their neural networks quickly will benefit greatly from the A100 GPU's improved specs, as well as from new features (such as TF32), which are discussed further below.

Figure 4 – Training comparison for BERT TF32 and FP16 benchmarks

A100 Specifications

At the heart of NVIDIA's A100 GPU is the NVIDIA Ampere architecture, which introduces double-precision tensor cores allowing for more than 2x the FP64 throughput of the V100, significantly reducing simulation run times. The double-precision FP64 performance is 9.7 TFLOPS, and with tensor cores this doubles to 19.5 TFLOPS. The single-precision FP32 performance is 19.5 TFLOPS, and with the new TensorFloat-32 (TF32) precision on tensor cores this rises to 156 TFLOPS (312 TFLOPS with structured sparsity, which is the basis for NVIDIA's figure of up to ~20x the FP32 throughput of the previous-generation V100).
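The peak throughput figures quoted for the A100 can be cross-checked with simple arithmetic. The Python sketch below divides A100 peak rates by V100 peak rates. The V100 values (7.8 TFLOPS FP64 and 15.7 TFLOPS FP32, NVIDIA's V100 SXM2 figures) and the 312 TFLOPS sparse TF32 value are assumptions not stated in this post, and `projected_runtime` is a hypothetical helper showing how a measured benchmark speedup maps to wall-clock time.

```python
"""Back-of-the-envelope checks on A100 vs V100 peak throughput (TFLOPS)."""

# A100 peak figures as quoted in this post.
A100_TFLOPS = {
    "fp64": 9.7,
    "fp64_tensor": 19.5,
    "fp32": 19.5,
    "tf32_tensor": 156.0,
    # Assumption: NVIDIA datasheet value with structured sparsity enabled.
    "tf32_tensor_sparse": 312.0,
}

# Assumption: NVIDIA V100 SXM2 peak figures (not stated in this post).
V100_TFLOPS = {
    "fp64": 7.8,
    "fp32": 15.7,
}


def ratio(a: float, b: float) -> float:
    """Return a / b rounded to one decimal place."""
    return round(a / b, 1)


def throughput_ratios() -> dict:
    """Compare selected A100 peak rates against a baseline rate."""
    return {
        # FP64 tensor cores vs. plain FP64 on the same A100
        "A100 FP64 tensor / A100 FP64": ratio(A100_TFLOPS["fp64_tensor"], A100_TFLOPS["fp64"]),
        # FP64 tensor cores vs. the V100's FP64 rate
        "A100 FP64 tensor / V100 FP64": ratio(A100_TFLOPS["fp64_tensor"], V100_TFLOPS["fp64"]),
        # Dense TF32 tensor cores vs. the V100's FP32 rate
        "A100 TF32 dense / V100 FP32": ratio(A100_TFLOPS["tf32_tensor"], V100_TFLOPS["fp32"]),
        # Sparse TF32 tensor cores vs. the V100's FP32 rate
        "A100 TF32 sparse / V100 FP32": ratio(A100_TFLOPS["tf32_tensor_sparse"], V100_TFLOPS["fp32"]),
    }


def projected_runtime(v100_hours: float, speedup: float) -> float:
    """Hypothetical helper: wall-clock hours on the A100 for a job that
    takes `v100_hours` on the V100, given a measured benchmark speedup."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return v100_hours / speedup


if __name__ == "__main__":
    for label, value in throughput_ratios().items():
        print(f"{label}: {value}x")
    # A 10-hour V100 job at the 5x BERT TF32 training speedup
    print(projected_runtime(10.0, 5.0), "hours")
```

Under these assumptions the dense TF32 ratio comes out at about 9.9x and the sparse one at about 19.9x, which suggests the ~20x comparison relies on sparsity, while 19.5 / 7.8 = 2.5x is consistent with the "more than 2x" FP64 claim. Peak ratios are theoretical; the measured benchmark speedups are the better guide for a specific workload.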
